Good to know that every time I feel the need to use ALGOL 68, I must remember to disable ligatures. Still not sure this is going to be a huge problem 😂

- 3 Posts

- 77 Comments

Well, that was something… I have used ligatures in my code editor for quite a few years now, and I have NEVER been confused by the ambiguity this person is so upset about. Why? I have never seen the Unicode not-equals character (≠) in a code block, simply because it is not a valid operator in any mainstream language. In fact, I have never even seen it in a string, where it actually would be legal, probably because nobody knows how to type it on a standard keyboard. This whole article felt like someone with a severe diagnosis had fixated on a hypothetical correctness issue that simply isn't a problem in the real world.

But if, for some reason, you find ligatures confusing, then you shouldn't use them. Just to be clear, though: there is no right or wrong here, whatever this blog post tries to argue. It is a matter of personal taste.
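To make the distinction concrete: a ligature only changes how the font draws the two ASCII characters `!` and `=`; the file still contains those two bytes. The real not-equals sign is a single code point, U+2260. A minimal sketch, assuming you just want to check whether a source file actually contains a literal `≠` rather than a rendered `!=` (the function name `find_not_equals_signs` is made up for illustration, not from any existing tool):

```rust
/// Unicode NOT EQUAL TO, U+2260.
const NOT_EQUAL_SIGN: char = '\u{2260}';

/// Return the 1-based line numbers of `source` that contain a literal U+2260.
/// A ligature-rendered `!=` is still two ASCII characters and is never reported.
fn find_not_equals_signs(source: &str) -> Vec<usize> {
    source
        .lines()
        .enumerate()
        .filter(|(_, line)| line.contains(NOT_EQUAL_SIGN))
        .map(|(i, _)| i + 1)
        .collect()
}

fn main() {
    // The ASCII operator: two characters, two bytes, whatever the font draws.
    let ascii = "!=";
    assert_eq!(ascii.chars().count(), 2);
    assert_eq!(ascii.len(), 2);

    // The Unicode sign: one character, three bytes in UTF-8.
    let unicode = "≠";
    assert_eq!(unicode.chars().count(), 1);
    assert_eq!(unicode.len(), 3);
    assert_eq!(unicode.chars().next(), Some(NOT_EQUAL_SIGN));

    // Only the second line holds a real U+2260 (inside a string literal).
    let source = "if a != b {\n    let s = \"a ≠ b\";\n}";
    println!("{:?}", find_not_equals_signs(source)); // [2]
}
```

The operator on line 1 is never flagged, no matter which font or ligature setting the editor uses.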

Splits, ligatures, tabs and more

Cosmic term is nice. It is still just alpha, though, so there are some rough edges.

8·2 months ago

For Boomers, cars were the latest tech that everyone was fiddling with. This meant that even the boomers who weren't very interested knew quite a lot about them. For later generations, cars became more of a means of transportation, and knowledge of cars was left to specialists. For Gen X, computers were the high-tech thing everyone was fiddling with. Most Gen Xers can set up a printer if they have to. For later generations, computers are just tools, and the knowledge is left to specialists.

18·2 months ago

Producing products that users want and that solve users' real problems. Not trying to make products as addictive as possible in order to harvest and sell as much user data as possible.

16·2 months ago

The problem is that C is a prehistoric language and doesn't have any complex types, for example. In a modern language you create a String. That string has a length and some well-defined properties (like its encoding). In C you have a `char *`, which is just a pointer to memory that contains bytes and, hopefully, is null-terminated. The null termination is defined but not enforced, and the encoding is whatever the developer had in mind. So the compiler simply doesn't have the information to make any decisions. In Rust you know exactly how long something lives, and if something tries to use it after that, the compiler can tell you. In C, all lifetimes live in the developer's head, and the compiler has no way of knowing about them. So all this typing and these properties of modern languages are basically the implementation of your suggestion.
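A small Rust sketch of the difference described above. `c_style_len` and `first_word` are hypothetical helpers: the first mimics what C's `strlen` has to do (scan for a zero byte, with no guarantee one exists), the second returns a borrow whose lifetime the compiler tracks. `String` carries its length and is guaranteed to be valid UTF-8, and `std::ffi::CStr::from_bytes_with_nul` checks the terminator explicitly instead of trusting it.

```rust
use std::ffi::CStr;

/// Mimic C's strlen: count bytes up to the first NUL.
/// Returns None when there is no terminator; a real strlen would keep
/// reading past the end of the buffer (undefined behaviour).
fn c_style_len(buf: &[u8]) -> Option<usize> {
    buf.iter().position(|&b| b == 0)
}

/// The returned &str borrows from `s`, so the compiler knows it must not
/// outlive the string it points into.
fn first_word(s: &str) -> &str {
    s.split_whitespace().next().unwrap_or("")
}

fn main() {
    // A String knows its byte length and is always valid UTF-8.
    let s = String::from("naïve string");
    println!("{} bytes, {} chars", s.len(), s.chars().count());

    // A C-style buffer: the length has to be discovered by scanning.
    let terminated: &[u8] = b"abc\0";
    let unterminated: &[u8] = b"abc";
    println!("{:?} {:?}", c_style_len(terminated), c_style_len(unterminated));

    // CStr makes the terminator check (and the UTF-8 check) explicit.
    println!("{:?}", CStr::from_bytes_with_nul(terminated).map(|c| c.to_str().is_ok()));
    println!("{}", CStr::from_bytes_with_nul(unterminated).is_err());

    let word: &str;
    {
        let owned = String::from("hello world");
        word = first_word(&owned);
        println!("{}", word); // fine: `owned` is still alive here
    }
    // Uncommenting the next line is a compile error in Rust
    // (`owned` does not live long enough); the C equivalent compiles happily.
    // println!("{}", word);
}
```

The point is not that Rust is magic, but that the length, encoding and lifetime are part of the type, so the compiler has something to check.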

3·2 months ago

It is making the tracking-protection part of containers obsolete; this is basically that functionality, but built in and on by default. Containers still let you have multiple cookie jars for the same site, so they are still useful if you have multiple accounts on a site.

7·2 months ago

Container tabs are still useful, as they let you use multiple cookie jars for the same site. That makes it very easy to have multiple accounts on a site.
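A toy model of the cookie-jar idea in these comments, assuming cookies are stored under a key made of the container, the top-level site in the address bar, and the site that set the cookie. This is not Firefox's actual implementation; `JarKey`, `CookieStore` and the example sites are all hypothetical. Two containers give two separate jars for the same site (two accounts), and a tracker embedded on two different top-level sites also gets two jars (the built-in tracking protection).

```rust
use std::collections::HashMap;

/// Hypothetical partition key for a cookie jar.
#[derive(Debug, Clone, PartialEq, Eq, Hash)]
struct JarKey {
    container: String,      // e.g. "Personal" or "Work"
    top_level_site: String, // the site in the address bar
    cookie_site: String,    // the site that set the cookie
}

/// Toy cookie store: one jar (name -> value) per partition key.
#[derive(Default)]
struct CookieStore {
    jars: HashMap<JarKey, HashMap<String, String>>,
}

impl CookieStore {
    fn set(&mut self, key: JarKey, name: &str, value: &str) {
        self.jars.entry(key).or_default().insert(name.to_string(), value.to_string());
    }

    fn get(&self, key: &JarKey, name: &str) -> Option<&str> {
        self.jars.get(key)?.get(name).map(String::as_str)
    }
}

/// Convenience constructor for a partition key.
fn key(container: &str, top: &str, site: &str) -> JarKey {
    JarKey { container: container.into(), top_level_site: top.into(), cookie_site: site.into() }
}

fn main() {
    let mut store = CookieStore::default();

    // Same site in two containers: two independent sessions (two accounts).
    store.set(key("Personal", "mail.example", "mail.example"), "session", "alice");
    store.set(key("Work", "mail.example", "mail.example"), "session", "bob");

    // A tracker cookie set while on news.example is not visible on shop.example.
    store.set(key("Personal", "news.example", "tracker.example"), "id", "123");
    let seen_on_shop = store.get(&key("Personal", "shop.example", "tracker.example"), "id");

    println!("{:?}", store.get(&key("Personal", "mail.example", "mail.example"), "session"));
    println!("{:?}", store.get(&key("Work", "mail.example", "mail.example"), "session"));
    println!("{:?}", seen_on_shop);
}
```

Drop the `container` field and you have roughly what the built-in protection does on its own; keep it and you get the multiple-accounts use case on top.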

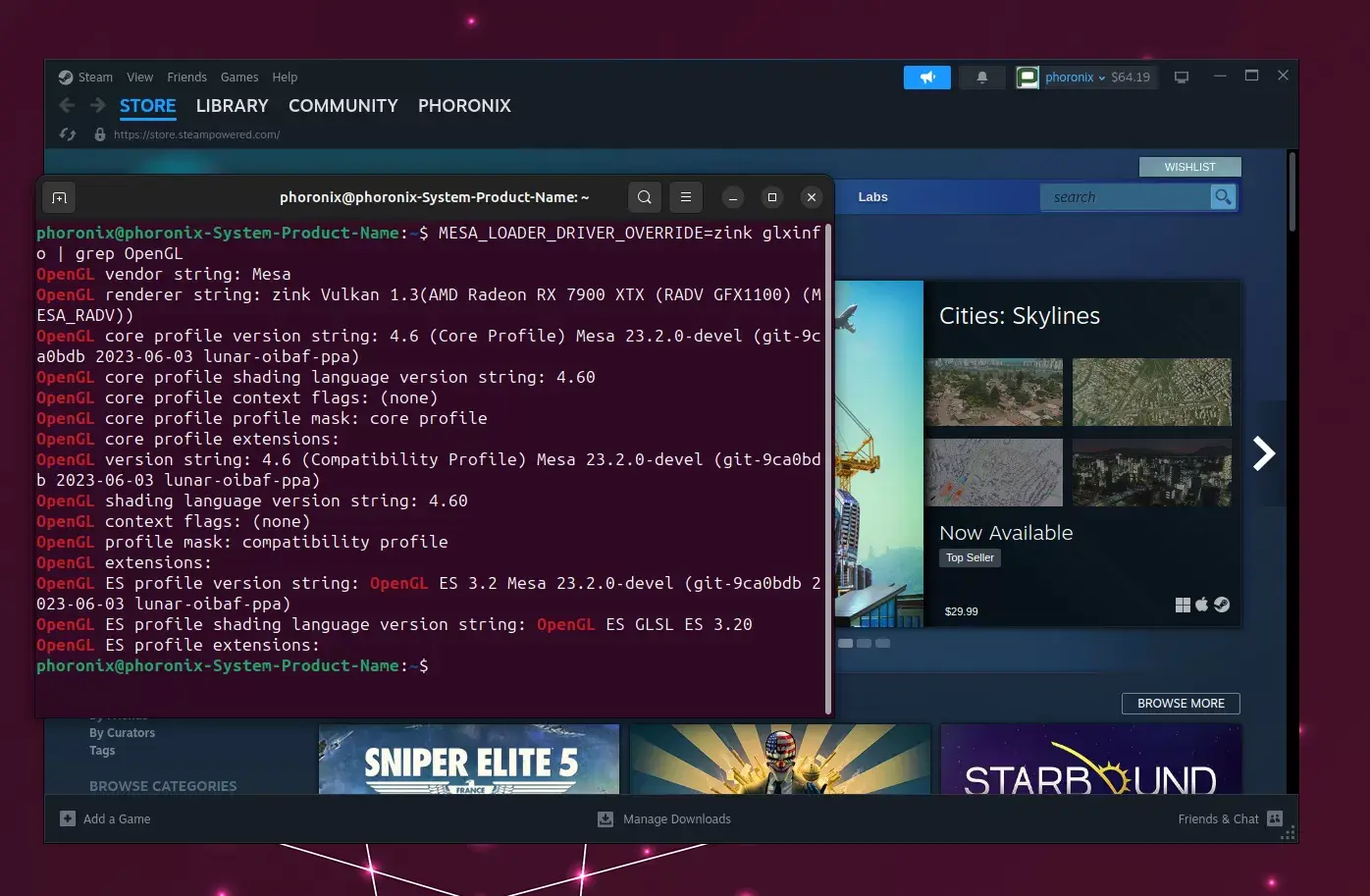

16·4 months ago

Comment about image

30·4 months ago

No, it is not based on Gnome. It is a full desktop environment written in Rust.

41·5 months ago

The problem with assassinating the Russian economy is doing it faster than it commits suicide.

12·5 months ago

Not the latest, but one of the biggest improvements was the Ultimate Hacking Keyboard. I have programmed it to have Vim navigation at the keyboard level. The latest was switching to Neovim and setting it up properly.
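A hypothetical sketch of what "Vim navigation at the keyboard level" means: while a layer key is held, h/j/k/l are sent to the computer as arrow keys, so the mapping works in every application, not just in the editor. This is not the UHK's configuration format (that is set up in its own configuration tool); the `Key` enum and `remap` function are made up for illustration.

```rust
/// Hypothetical key codes; real keyboard firmware works with HID usage codes.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Key {
    Char(char),
    Left,
    Down,
    Up,
    Right,
}

/// Translate a key press given whether the navigation layer key is held.
/// Outside the layer every key passes through unchanged.
fn remap(key: Key, nav_layer_held: bool) -> Key {
    if !nav_layer_held {
        return key;
    }
    match key {
        Key::Char('h') => Key::Left,
        Key::Char('j') => Key::Down,
        Key::Char('k') => Key::Up,
        Key::Char('l') => Key::Right,
        other => other,
    }
}

fn main() {
    let typed = [Key::Char('j'), Key::Char('j'), Key::Char('l'), Key::Char('x')];
    let sent: Vec<Key> = typed.iter().map(|&k| remap(k, true)).collect();
    println!("{:?}", sent);
}
```

Because the translation happens before the host sees the key, the operating system only ever receives ordinary arrow keys.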

402·6 months ago

You are confusing Google with the Internet… they are very different things.

121·6 months ago

I had to test with Kagi as well: it leads with the official documentation, followed by tutorials and unofficial material. Nothing obviously irrelevant. The only issue with the Kagi results was that there were a few very similar official documentation links (for different PostgreSQL versions) at the top. Still, good search results. Not sure why anyone is still using Google when there are quite a few better alternatives available.

9·7 months ago

This is obvious to people who understand the basics of LLMs. However, people are fooled by how intelligent these LLMs sound, so they mistake them for actually being intelligent. So, even if this is kicking in an open door, I still think it's good that someone does it, to make it clear that LLMs are not generally intelligent.

2·8 months ago

That's why having used it before it was the default felt very early; I mean, before 2016 felt too early for me… But it was well before Covid, so I'd say around 2017.

2·8 months ago

I know I have used it since Fedora made it the default in 2016. I think I actually used it for a while before that, but I don't have anything to help me pin down the exact time.

Since I only use Intel's built-in GPU, everything has worked pretty well. The few times I needed to share my screen, I had to log out and log in to an X session. However, that was solved a couple of years ago. Now I'm just waiting for Java to get proper Wayland support, so I can fully ditch X for daily use and take advantage of Wayland's multi-DPI capabilities.

I have been a Vim user for more than 20 years. I tried to quit for a couple of years, but now I have just accepted my fate.

Are you saying it is common for people to use UTF-8 characters that you cannot easily type on a standard keyboard? I'm very skeptical of that claim.